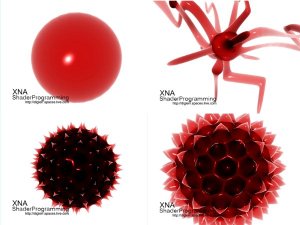

Welcome to the 14th tutorial in the XNA Shader Programming series. Last time, we looked at using alpha maps and the alpha channel to make objects look transparent. Today we are going to dive a bit deeper into transparency by implementing transmittance.

Materials like glass, water, crystal, gas, air and so on absorb light as light rays pass through them. In tutorial 13, we used alpha maps to make things look transparent, and could create a transparent glass ball just by using an alpha map with the color RGB(0.5, 0.5, 0.5). This approach works well in many cases, but it makes the transparency quite flat. Objects in the real world, say a glass sphere, absorb and scatter light as the light rays pass through them. The longer a ray stays inside the glass ball, the more light is scattered and absorbed before it comes out. The fraction of light that makes it through is called transmittance ( wikipedia ). To calculate the transmittance ( T ), we can apply the Beer-Lambert law ( wikipedia ) to the light rays that pass through the transmitter. Let's take a look at the Beer-Lambert law and see what we need to calculate!

Beer-Lamberts law:

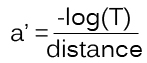

[1]

[1]where T is the transmittance, a’ is the absorbtion factor, c is the consistensy of the absorbing object and d is the thickness of the object.

So, in order to use this, we need to find a’, c and d.

Let’s start with c. c controls how much light is absorbed when traveling through the transmitter. This value can simply be set to any user-specified number above 0.0.

Next is a’. We can solve [1] for a’, which gives the same form the shader code later in this tutorial uses:

$$a' = -\frac{\ln T}{d_u} \qquad [2]$$

Here T is the darkest transmitting color, and that color is reached at the distance $d_u$ (Du in the shader code).

Finally, we have the d variable. This variable is set to the thickness of the object at a given point, and it is probably the hardest part to get right. In this tutorial, we calculate d quite correctly for any non-complex object (one that does not contain holes or "arms" sticking out, such as a sphere or a simple glass figure). The object used in this tutorial is a complex one, because we are going to get that case right in a later tutorial, but let’s start simple!

Now that we have all the variables needed to calculate T at a given point, we can use it to see how much light is absorbed. This is done by multiplying the color of the light ray ( the pixel behind the transmitter ) by T!

So, how do we calculate the distance each light ray travels through the transmitter? By using the depth buffer ( wikipedia )!
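To make the two equations concrete, here is a small numeric sketch in plain Python rather than HLSL, so the arithmetic can be checked outside the shader. The helper names (`absorption`, `transmittance`, `shade`) and the sample colors are made up for illustration. It assumes the absorption factor is computed as a' = -ln(T) / Du, where Du is the distance at which the darkest color is reached, which is what the shader code later in this tutorial does.

```python
import math

def absorption(darkest_color, du):
    """Per-channel a' = -ln(T) / Du. Every channel must be greater than 0."""
    return tuple(-math.log(channel) / du for channel in darkest_color)

def transmittance(a, c, d):
    """Per-channel T = exp(-a' * c * d)."""
    return tuple(math.exp(-a_channel * c * d) for a_channel in a)

def shade(background, darkest_color, du, c, d):
    """Color seen through the transmitter: background pixel multiplied by T."""
    t = transmittance(absorption(darkest_color, du), c, d)
    return tuple(b * t_channel for b, t_channel in zip(background, t))

glass = (0.5, 0.8, 0.9)              # hypothetical darkest color of the glass
a = absorption(glass, 1.0)
thin = transmittance(a, 1.0, 0.0)    # zero thickness: nothing is absorbed
full = transmittance(a, 1.0, 1.0)    # d == Du with c == 1: the darkest color
```

With c = 1 the glass reaches its darkest color at d = Du (up to floating-point rounding). Raising c makes the same thickness absorb more, so the object darkens sooner.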

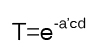

The depth buffer ( Z-buffer ) can be thought of as a grayscale image of the scene, where the grayscale value indicates how far away an object is from the camera. So, if you take a look at the image at the top of the article, we see a complex glass object. The scene's depth buffer looks something like this:

The depth buffer needs correct values for the Near and Far clipping planes of the projection matrix, preferably with Near at the transmitter vertex closest to the camera and Far at the transmitter vertex farthest from it. That way the transmitter's depth is spread over as much of the available range as possible.
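Why the clipping planes matter can be seen with a quick calculation. The sketch below is plain Python with hypothetical helper names and made-up distances. It assumes a standard Direct3D-style perspective projection, where the depth after the divide by w runs from 0 at Near to 1 at Far.

```python
def projected_depth(view_z, near, far):
    """Depth after the perspective divide (z/w): 0 at near, 1 at far."""
    return far / (far - near) * (1.0 - near / view_z)

def depth_span(front_z, back_z, near, far):
    """How much of the 0..1 depth range the object occupies."""
    return projected_depth(back_z, near, far) - projected_depth(front_z, near, far)

# An object whose nearest vertex is 10 units and farthest vertex is 12 units away.
loose = depth_span(10.0, 12.0, near=1.0, far=1000.0)   # generic scene planes
tight = depth_span(10.0, 12.0, near=10.0, far=12.0)    # planes fitted to the object
```

With the loose planes the object uses less than 2% of the depth range, so the difference between front and back depth is tiny. With the fitted planes it uses the whole range.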

To get the thickness, we render the back faces into one depth texture and the front faces into another depth texture.

Taking the difference between the two gives us a texture that can look like this. The grayscale value indicates how far a light ray has to travel to get through the transmitter. White is far, and black is short or nothing.
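The per-pixel subtraction behind that thickness texture can be sketched in plain Python. The function names and sample depths are made up. It assumes depth is stored as 1 - z/w, as the depth-map vertex shader in this tutorial does, and that both depth textures are cleared to 0 (black) before rendering.

```python
def stored_depth(z_over_w):
    """Value written to the depth texture: 1 at the near plane, 0 at the far plane."""
    return 1.0 - z_over_w

def thickness(front_stored, back_stored):
    """Front faces are nearer, so their stored value is the larger of the two."""
    return front_stored - back_stored

front = stored_depth(0.25)          # ray enters the transmitter
back = stored_depth(0.75)           # ray leaves the transmitter
inside = thickness(front, back)     # pixel covered by the transmitter
outside = thickness(0.0, 0.0)       # both textures still hold the clear value
```

Pixels that the transmitter does not cover end up with a thickness of 0, which gives T = 1 and leaves the background untouched.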

We are going to look at how to get the depth buffer and render it to a texture in the "Using the shader" section of the tutorial, but first, let’s see how to implement the shaders.

float4x4 matWorldViewProj;

float4x4 matInverseWorld;

float4 vLightDirection;

float4 vecLightDir;

float4 vecEye;

float4 vDiffuseColor;

float4 vSpecularColor;

float4 vAmbient;

texture ColorMap;

sampler ColorMapSampler = sampler_state

{

Texture = <ColorMap>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

struct OUT

{

float4 Pos : POSITION;

float2 Tex : TEXCOORD0;

float3 L : TEXCOORD1;

float3 N : TEXCOORD2;

float3 V : TEXCOORD3;

};

OUT VertexShader(float4 Pos : POSITION, float2 Tex : TEXCOORD0, float3 N : NORMAL)

{

OUT Out = (OUT) 0;

Out.Pos = mul(Pos, matWorldViewProj);

Out.Tex = Tex;

Out.L = normalize(vLightDirection);

Out.N = normalize(mul(matInverseWorld, N));

Out.V = vecEye - Pos;

return Out;

}

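float4 PixelShader(float2 Tex : TEXCOORD0, float3 L : TEXCOORD1, float3 N : TEXCOORD2, float3 V : TEXCOORD3) : COLOR

{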

float3 ViewDir = normalize(V);

// Calculate normal diffuse light.

float4 Color = tex2D(ColorMapSampler, Tex);

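float Diff = saturate(dot(L, N));

// Calculate the reflection vector for the specular term.

float3 Reflect = normalize(2 * Diff * N - L);

float Specular = pow(saturate(dot(Reflect, ViewDir)), 128); // R.V^n

return Color*vAmbient + Color*vDiffuseColor * Diff + vSpecularColor * Specular;

}

technique EnvironmentShader

{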

pass P0

{

VertexShader = compile vs_2_0 VertexShader();

PixelShader = compile ps_2_0 PixelShader();

}

}

struct OUT_DEPTH

{

float4 Position : POSITION;

float Distance : TEXCOORD0;

};

OUT_DEPTH RenderDepthMapVS(float4 vPos: POSITION)

{

OUT_DEPTH Out;

// Translate the vertex using matWorldViewProj.

Out.Position = mul(vPos, matWorldViewProj);

// Get the distance of the vertex between near and far clipping plane in matWorldViewProj.

Out.Distance.x = 1-(Out.Position.z/Out.Position.w);

return Out;

}

float4 RenderDepthMapPS( OUT_DEPTH In ) : COLOR

{

return float4(In.Distance.x,0,0,1);

}

technique DepthMapShader

{

pass P0

{

ZEnable = TRUE;

ZWriteEnable = TRUE;

AlphaBlendEnable = FALSE;

VertexShader = compile vs_2_0 RenderDepthMapVS();

PixelShader = compile ps_2_0 RenderDepthMapPS();

}

}

First of all, we need the background scene texture, the transmitting objects' scene ( the texture containing all of the objects that will be transmitters ) and the two depth buffer textures!

sampler D1MSampler = sampler_state

{

Texture = <D1M>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

sampler D2MSampler = sampler_state

{

Texture = <D2M>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

sampler BGSceneSampler = sampler_state

{

Texture = <BGScene>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

sampler SceneSampler = sampler_state

{

Texture = <Scene>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

float Du = 1.0f;

float C = 12.0f;

float4 PixelShader(float2 Tex: TEXCOORD0) : COLOR

{

float4 Color=tex2D(SceneSampler, Tex);

float4 BGColor=tex2D(BGSceneSampler, Tex);

float depth1=tex2D(D1MSampler, Tex).r;

float depth2=tex2D(D2MSampler, Tex).r;

// Thickness of the transmitter at this pixel.

float distance = ((depth2-depth1));

float3 a;

a.r = (-log(Color.r))/Du;

a.g = (-log(Color.g))/Du;

a.b = (-log(Color.b))/Du;

float4 T;

T.r = exp((-a.r)*C*distance)+0.000001;

T.g = exp((-a.g)*C*distance)+0.000001;

T.b = exp((-a.b)*C*distance)+0.000001;

T.w = 1;

return T*BGColor;

}

technique PostProcess

{

pass P0

{

// A post process shader only needs a pixel shader.

PixelShader = compile ps_2_0 PixelShader();

}

}

// Global variables

float Du = 1.0f;

float C = 12.0f;

sampler ColorMapSampler : register(s0);

texture D1M;

texture D2M;

texture BGScene;

texture Scene;

sampler D1MSampler = sampler_state

{

Texture = <D1M>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

sampler D2MSampler = sampler_state

{

Texture = <D2M>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

sampler BGSceneSampler = sampler_state

{

Texture = <BGScene>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

sampler SceneSampler = sampler_state

{

Texture = <Scene>;

MinFilter = Linear;

MagFilter = Linear;

MipFilter = Linear;

AddressU = Clamp;

AddressV = Clamp;

};

float4 PixelShader(float2 Tex: TEXCOORD0) : COLOR

{

float4 Color=tex2D(SceneSampler, Tex);

float4 BGColor=tex2D(BGSceneSampler, Tex);

float depth1=tex2D(D1MSampler, Tex).r;

float depth2=tex2D(D2MSampler, Tex).r;

float distance = ((depth2-depth1));

float3 a;

a.r = (-log(Color.r))/Du;

a.g = (-log(Color.g))/Du;

a.b = (-log(Color.b))/Du;

float4 T;

T.r = exp((-a.r)*C*distance)+0.000001;

T.g = exp((-a.g)*C*distance)+0.000001;

T.b = exp((-a.b)*C*distance)+0.000001;

T.w = 1;

return T*BGColor;

}

technique PostProcess

{

pass P0

{

// A post process shader only needs a pixel shader.

PixelShader = compile ps_2_0 PixelShader();

}

}

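RenderTarget2D renderTarget;

Texture2D SceneTexture;

RenderTarget2D depthRT;

DepthStencilBuffer depthSB;

RenderTarget2D depthRT2;

DepthStencilBuffer depthSB2;

Texture2D depth1Texture;

Texture2D depth2Texture;

EffectTechnique environmentShader;

EffectTechnique depthMapShader;

float Du = 1.0f;

float C = 12.0f;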

// Create our render targets

PresentationParameters pp = graphics.GraphicsDevice.PresentationParameters;

renderTarget = new RenderTarget2D(graphics.GraphicsDevice, pp.BackBufferWidth, pp.BackBufferHeight, 1, graphics.GraphicsDevice.DisplayMode.Format);

depthRT = new RenderTarget2D(graphics.GraphicsDevice, pp.BackBufferWidth, pp.BackBufferHeight, 1, SurfaceFormat.Single); // 32-bit float format using 32 bits for the red channel.

depthRT2 = new RenderTarget2D(graphics.GraphicsDevice, pp.BackBufferWidth, pp.BackBufferHeight, 1, SurfaceFormat.Single); // 32-bit float format using 32 bits for the red channel.

depthSB = CreateDepthStencil(depthRT, DepthFormat.Depth24Stencil8);

depthSB2 = CreateDepthStencil(depthRT2, DepthFormat.Depth24Stencil8);

private DepthStencilBuffer CreateDepthStencil(RenderTarget2D target)

{

return new DepthStencilBuffer(target.GraphicsDevice, target.Width,

target.Height, target.GraphicsDevice.DepthStencilBuffer.Format,

target.MultiSampleType, target.MultiSampleQuality);

}

private DepthStencilBuffer CreateDepthStencil(RenderTarget2D target, DepthFormat depth)

{

if (GraphicsAdapter.DefaultAdapter.CheckDepthStencilMatch(DeviceType.Hardware,

GraphicsAdapter.DefaultAdapter.CurrentDisplayMode.Format, target.Format,

depth))

{

return new DepthStencilBuffer(target.GraphicsDevice, target.Width,

target.Height, depth, target.MultiSampleType, target.MultiSampleQuality);

}

else

return CreateDepthStencil(target);

}

// Get our techniques and store them in variables.

environmentShader = effect.Techniques["EnvironmentShader"];

depthMapShader = effect.Techniques["DepthMapShader"];

void DrawScene(bool transmittance)

{

// Begin our effect

effect.Begin(SaveStateMode.SaveState);

foreach (EffectPass pass in effect.CurrentTechnique.Passes)

{

// Begin current pass

pass.Begin();

// 'model' is a placeholder name for the loaded Model.

foreach (ModelMesh mesh in model.Meshes)

{

foreach (ModelMeshPart part in mesh.MeshParts)

{

// calculate our worldMatrix..

worldMatrix = bones[mesh.ParentBone.Index] * renderMatrix;

graphics.GraphicsDevice.Vertices[0].SetSource(mesh.VertexBuffer, part.StreamOffset, part.VertexStride);

graphics.GraphicsDevice.Indices = mesh.IndexBuffer;

graphics.GraphicsDevice.DrawIndexedPrimitives(PrimitiveType.TriangleList,

part.BaseVertex, 0, part.NumVertices,

part.StartIndex, part.PrimitiveCount);

}

}

pass.End();

}

// Stop using this effect

effect.End();

}

effect.CurrentTechnique = depthMapShader;

GraphicsDevice.RenderState.CullMode = CullMode.CullClockwiseFace;

depth1Texture = RenderDepthMap(depthSB,depthRT);

effect.CurrentTechnique = depthMapShader;

GraphicsDevice.RenderState.CullMode = CullMode.CullCounterClockwiseFace;

depth2Texture = RenderDepthMap(depthSB2, depthRT2);

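effect.CurrentTechnique = environmentShader;

graphics.GraphicsDevice.SetRenderTarget(0, renderTarget);

graphics.GraphicsDevice.Clear(Color.White);

DrawScene(true);

// Resolve the render target before reading it back as a texture.

graphics.GraphicsDevice.SetRenderTarget(0, null);

SceneTexture = renderTarget.GetTexture();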

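// dsb is the depth-stencil buffer that matches rt2D (parameter name assumed).

private Texture2D RenderDepthMap(DepthStencilBuffer dsb, RenderTarget2D rt2D)

{

GraphicsDevice.RenderState.DepthBufferFunction = CompareFunction.LessEqual;

GraphicsDevice.SetRenderTarget(0, rt2D);

// Save the current depth-stencil buffer and switch to the one for this render target.

DepthStencilBuffer saveSB = GraphicsDevice.DepthStencilBuffer;

GraphicsDevice.DepthStencilBuffer = dsb;

GraphicsDevice.Clear(Color.Black);

DrawScene(true);

// Restore the back buffer and the original depth-stencil buffer.

GraphicsDevice.SetRenderTarget(0, null);

GraphicsDevice.DepthStencilBuffer = saveSB;

return rt2D.GetTexture();

}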

spriteBatch.Begin(SpriteBlendMode.None, SpriteSortMode.Immediate, SaveStateMode.SaveState);

{

// Apply the post process shader

effectPost.Begin();

{

effectPost.CurrentTechnique.Passes[0].Begin();

{

effectPost.Parameters["D1M"].SetValue(depth1Texture);

effectPost.Parameters["D2M"].SetValue(depth2Texture);

effectPost.Parameters["BGScene"].SetValue(m_BGScene);

effectPost.Parameters["Scene"].SetValue(SceneTexture);

effectPost.Parameters["Du"].SetValue(Du);

effectPost.Parameters["C"].SetValue(C);

spriteBatch.Draw(SceneTexture, new Rectangle(0, 0, 800, 600), Color.White);

effectPost.CurrentTechnique.Passes[0].End();

}

}

effectPost.End();

}

spriteBatch.End();

You might have noticed that I have not used effect.CommitChanges(); in this code. If you are rendering many objects using this shader, you should call it between pass.Begin() and pass.End() so that the changes take effect in the current pass, and not in the next pass. This is needed whenever you set shader parameters inside the pass.

The source code is based on this tutorial but adds this feature.

As I promised you, I will write a tutorial that covers this in depth, but this gives you an idea on how to solve it.

Basically, what Martin does is first render the two depth buffers as I do in this tutorial ( using CompareFunction.LessEqual ). In addition, he creates two new depth render targets, but instead of LessEqual he uses GreaterEqual to calculate a new distance ( from behind ), and adds this to the object's depth.
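A rough way to picture the two-layer idea is to look at a single view ray and the depths at which it crosses front and back faces. The sketch below is plain Python with made-up names and sample depths. It is only one reading of the description above, not Martin's actual code. LessEqual keeps the nearest front/back pair, GreaterEqual keeps the farthest pair, and the two thicknesses are added unless both passes found the same pair (the convex case), which would otherwise be counted twice.

```python
def single_layer(front_depths, back_depths):
    """LessEqual only: nearest back face minus nearest front face."""
    return min(back_depths) - min(front_depths)

def two_layer(front_depths, back_depths):
    """Add the farthest pair (GreaterEqual) to the nearest pair (LessEqual)."""
    near = min(back_depths) - min(front_depths)
    far = max(back_depths) - max(front_depths)
    same_pair = (min(front_depths) == max(front_depths)
                 and min(back_depths) == max(back_depths))
    # Both passes saw the same surfaces: count the thickness once.
    return near if same_pair else near + far

# A ray through a U-shaped object: enters at 1, leaves at 2, enters at 3, leaves at 4.
concave = ([1.0, 3.0], [2.0, 4.0])
# A ray through a sphere: one entry at 1, one exit at 3.
convex = ([1.0], [3.0])
```

For the U-shaped ray the single-layer method only sees the first arm (thickness 1), while the two-layer version recovers both arms (thickness 2). This still assumes at most two separate layers along a ray.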

The source can be found here: Executable + Source

What he does is compute the transmission separately for each object. This allows you to have specialized Du and C components for each object, and it also reduces the memory usage on the GPU, as well as the pixel overdraw that comes from computing transmission as a fullscreen quad. He has modified the 2-layer transmission approach to include these features. Now only three render targets are created in total (1 for near-back face depth, the other 2 for far depth), and each is of half-single (16-bit) precision. The position reconstruction technique works excellently with this.

Fantastic tutorial!

I’m glad you liked it, and thank you for the previous comments as well :) I will add a last tutorial on the transmittance parts when I get the correct depth calculation!

This code only really works for convex objects. Wherever an object overlaps itself, things go wrong :/ My suggested simple fix, which I imagine would work for most objects, would be to render the object with transmittance twice and change the depth CompareFunction: first render the object with CompareFunction.GreaterEqual when calculating the depth values to get the "back" of the object, and then use CompareFunction.LessEqual to get the front of the object. You might have to do something to make sure that areas that *don’t* overlap don’t get drawn twice and end up twice as coloured.

Thank you for the comment. As we discussed over e-mail, I will add your example to the tutorial!

Petri, excellent tutorial! It would be neat to try applying other optics principles using this approach. However, there are a few improvements that could be made to this. For one, acquiring depth using a projection method (dividing z by w) yields a non-linear depth value. If you use this same depth value for transmission calculations, that’s going to give strange results at varying distances or along triangles which extend past the camera’s view (imagine a very large plane past the camera’s view: the interpolated distance in the vertex shader will yield funky results). Secondly, for accurate transmission, one would need to compute the distances in world space. Having a camera-based value isn’t accurate. Also, I believe considerably less texture memory could be used. In this example and the two-layered transmittance example, two render targets at 32-bit depth are allocated for the lesser-depth front and back faces of transmissive geometry. Along with this, a render target is allocated to fit the color data of any transmissive geometry. The transmission effect occurs as a post-process with constants defining the Du and C components. This certainly isn’t a bad approach, but a far better one would be to compute transmission specially for each object. This would allow you to have specialized Du and C components for each object and also reduces the memory usage on the GPU, as well as the pixel overdraw which comes from computing transmission as a fullscreen quad. Matt Vitelli

Hi, all download links from your darkcodex website are no longer available. Could you upload your files somewhere else, please?

Thanks.

I enjoyed reading this